Bank of England Launches AI Risk Scenario Tests, Warns of Algorithmic 'Herding Effect' on Financial System

In a letter submitted to lawmakers on Thursday, the Bank of England stated that it is conducting scenario analysis and simulation tests to assess the risks that artificial intelligence (AI) may pose to the financial system.

The central bank pushed back against a parliamentary Treasury Committee assessment that it had taken a "wait-and-see" approach to AI risks, noting that it is continuously analyzing how AI investment and adoption are reshaping the structure of the financial system.

The bank also indicated that it is working with international counterparts to examine how AI agents could transform financial market trading patterns. Sarah Breeden, Deputy Governor responsible for financial stability, noted that the testing will focus on the so-called "herding effect," in which investors or algorithms converge on similar actions during periods of market stress and thereby amplify the risk of market sell-offs.

Concerns about AI risks within the financial system have intensified since Anthropic released its Mythos product last week. The product can identify serious software vulnerabilities that are nearly undetectable by humans.

According to reports, the US Department of Commerce's Center for AI Standards and Innovation, along with other government officials, has been quietly evaluating the capabilities of Anthropic's new-generation model to identify potential risks and opportunities. Experts noted that the product's powerful programming capabilities could provide new avenues for discovering and exploiting cybersecurity vulnerabilities.

A Growing Threat

Bank of England Governor Andrew Bailey remarked that Anthropic may have "found a way into the entire world of cyber risk." Bailey pointed out that global regulators need to rapidly assess the potential threats posed by the Mythos model. "The question is, to what extent this new product can identify vulnerabilities in other systems and be used to carry out cyber attacks."

Meanwhile, the Treasury Committee criticized the UK Treasury for failing to commit to bringing major AI and cloud service companies under the "Critical Third Party Regulatory Framework" by the end of 2026. This framework is designed to regulate infrastructure providers deemed essential to the financial system.

Meg Hillier, Chair of the Treasury Committee and a member of the governing Labour Party, expressed mixed feelings: "I am glad to see the Bank of England beginning to address this issue to some degree, but the Treasury's slow pace remains perplexing." She further noted that "the powers granted by the Critical Third Party framework have not yet been meaningfully deployed, and we remain in a position of potential vulnerability. I genuinely cannot understand why progress has been so slow. We will continue to closely monitor this situation."

Lucy Rigby, Treasury Minister, told the committee that the government expects to make its first batch of critical third-party designations this year but, to ensure procedural fairness, will not disclose the specific companies under consideration.

In an April 1st statement, the Bank of England's Financial Policy Committee indicated that financial institutions have not yet deployed advanced AI (such as AI agents with autonomous decision-making capabilities) in ways that could trigger systemic risks. However, it warned that as the financial sector accelerates AI adoption, related risks could rise rapidly.

Related Articles

Communiqué of the Fourth Plenary Session of the 20th Central Committee of the Communist Party of China

HKMA Grants First Stablecoin Licenses to HSBC, Anchor — What It Means for HKD and Digital Finance

China State Council Sets Target: Service Sector Output to Exceed 100 Trillion Yuan by 2030

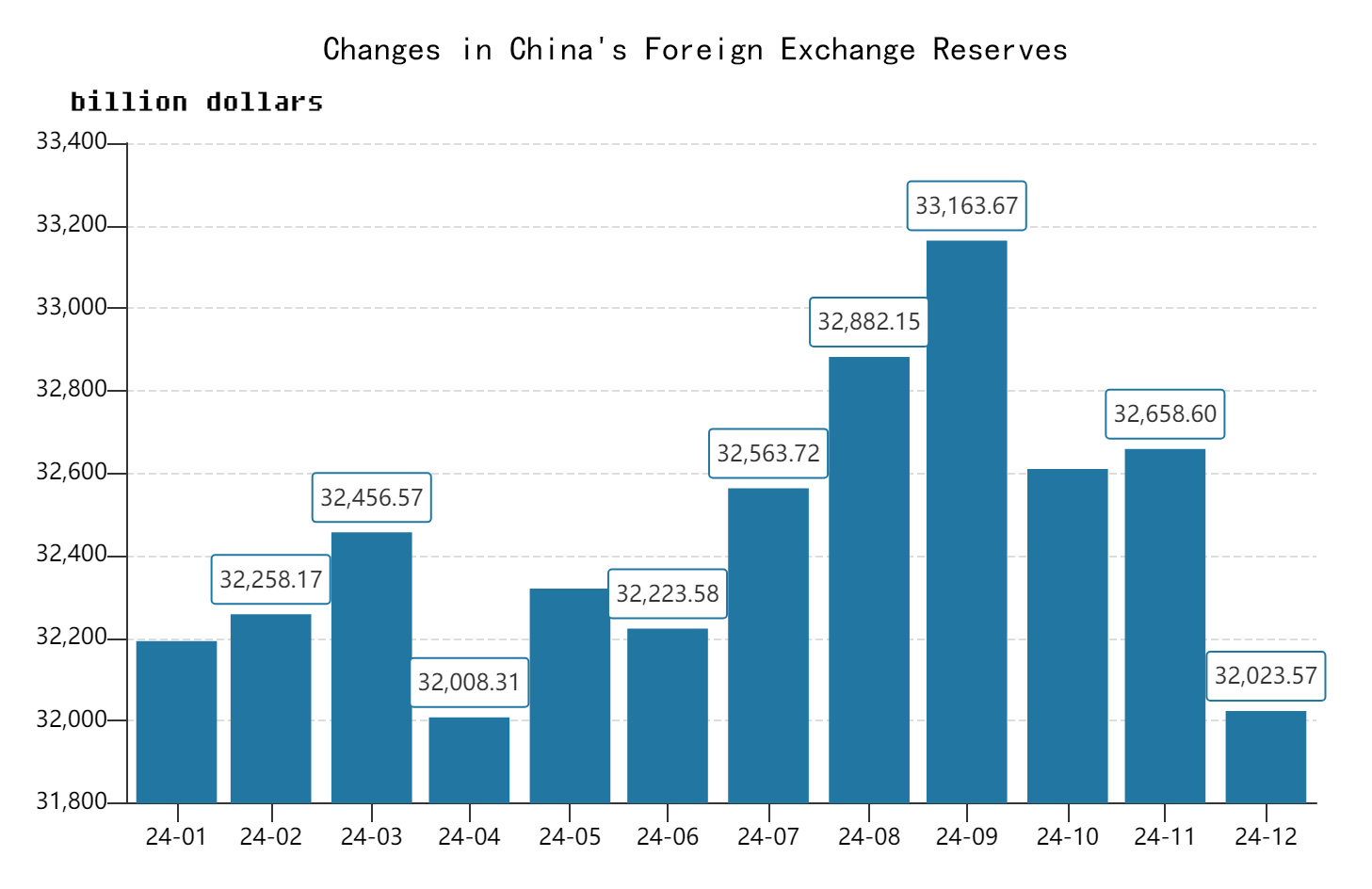

China's foreign exchange reserves stood at $3.2 trillion in 2024, equivalent to RMB 23 trillion.

PBoC Ends Tight Operations: 600 Billion Yuan MLF on May 25 as Late-Month Liquidity Pressures Mount